Was Einstein Right?

Essay 1: Uncertainty about Uncertainty

Albert Einstein was the greatest scientific genius of modern times. In the space of a single year, before he even had a PhD, he published four papers which established the atomic theory, quantum theory, the special theory of relativity and the groundbreaking equation E = mc². He was then 26. By 34 he had a professorship in Berlin and was the youngest member of the prestigious Prussian Academy of Sciences. Then at 36, with the publication of his general theory of relativity, he appeared to have toppled Newton. When his theory seemed to be vindicated by the Royal Society in 1919, he became the most celebrated scientist in the world. At 43 he was awarded the 1921 Nobel Prize in Physics. But in the late 1920s, as he approached 50, he plummeted from the pinnacle of physics. He was no longer taken seriously by professional physicists. They regarded him as little more than a remarkable oddity, and as he advanced into the last quarter century of his life, despite his tireless work on field theory, he was disregarded as a reactionary old man who was well past his prime. What happened?

In 1927 a young German theorist, Werner Heisenberg, who two years earlier had launched a new system called quantum mechanics, commandeered quantum theory with the publication of his now famous principle of uncertainty. It became the fulcrum of physics, but Einstein despised Heisenberg's mechanics. He described it as “...a real witches’ calculus...most ingenious, and adequately protected by its great complexity against being proved wrong.” 1

Albert Einstein was an outspoken critic of uncertainty in quantum theory. That cost him his lofty position and reputation in physics. He was no longer driving the quantum bandwagon he had set in motion. A Dane had seized the reins, then handed them over to a young Hun who hurtled off in an uncertain direction with a gaggle of headstrong Turks behind him, leaving Einstein in a ditch. The new generation of physicists were not listening to him. In the abandon of youth they were deaf to his pleas for common sense.

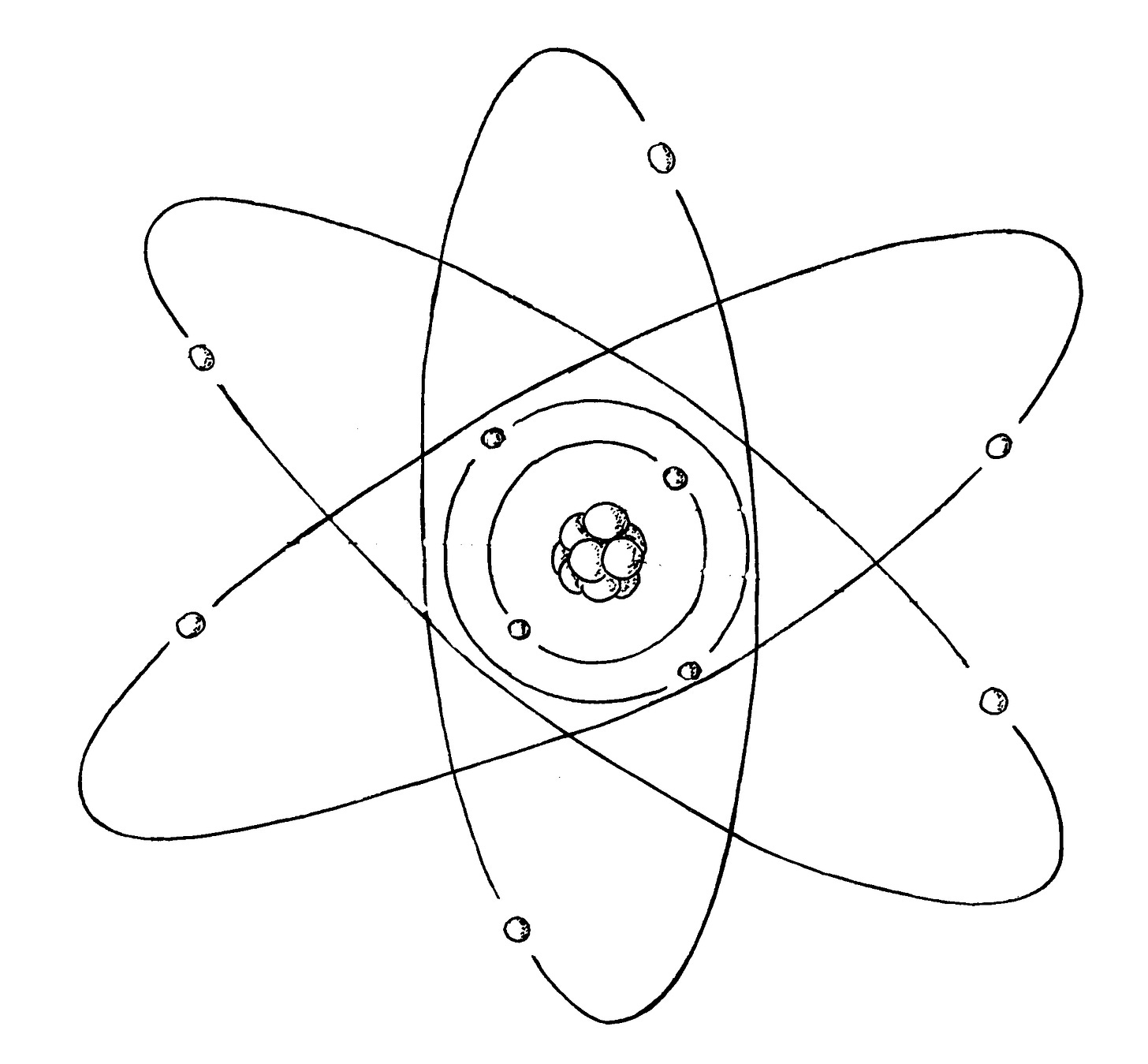

In England, meanwhile, an intrepid New Zealander, Ernest Rutherford, had been probing the atom with the mysterious rays of radioactivity. The old professor at Cambridge, Sir J. J. Thomson, had discovered the electron in 1897, and in 1911 Rutherford uncovered a central nucleus in the atom around which the electrons orbited.

Then in 1913 a 28-year-old Danish theorist, Niels Bohr, made his name by applying Einstein’s quantum theory to the orbiting electron in a hydrogen atom. He determined that electrons exist in atoms in certain quantum states and that they can take a quantum leap between these states as they emit or absorb energy. This is the atom as we know it today.

For his momentous achievement Bohr was awarded the 1922 Nobel Prize. In 1916, after he had been appointed professor of physics in Copenhagen, Bohr set out to pull the epicentre of quantum physics to Denmark. He began to recruit brains. By 1920 he had assembled a team of brilliant young minds for his new Copenhagen Institute of Theoretical Physics, which formally opened in 1921. Werner Heisenberg was the rising star among them.

Determined to make his mark in physics, the young Heisenberg needed a bright idea. It came to him one evening in 1922. He was in a park pondering the quantum leap of electrons in atoms. A lamplighter was lighting the gas street lamps, and as the shades of night drew in Heisenberg noticed a man walking along the path beneath them. He could see the man only when he walked into the light of a lamp; when he continued into the dark between the lamps he disappeared. Heisenberg realised he could only be certain of the man’s existence when he interacted with the light of a lamp. In the dark, when the man was between the lamps, Heisenberg was no longer certain he was there. He concluded that likewise, as an observer, he could only be certain of an electron in the atom when it was absorbing or emitting light; otherwise he could not be certain of its existence. That was how the concept of uncertainty crept into the world of quantum physics. It would lead eventually to the Copenhagen Interpretation of quantum reality, which concluded that we can only be certain that things exist when they are observed.

For most of us it would appear obvious that if the Universe has been around for 13.8 billion years and Homo sapiens appeared within it only 190,000 years ago, it must have existed before us; we are, after all, made of its ancient atoms. That common sense is called realism. But the Copenhagen crew would dismiss our realism as naïve. They would argue we could only presume that the Universe existed before we came into existence. We could not be certain because we were not there to observe it. Like the man walking in the dark between the gas lamps, the Universe, even if it existed, was in the dark of not yet being observed, at least by humans. Common sense doesn’t apply in quantum mechanics. Even Einstein couldn’t succeed in bringing the common sense of realism into it. In 1964 a physicist from CERN, John S. Bell, thought he had won the argument for Einstein with his now famous Bell’s theorem, but when that failed he despaired of quantum mechanics, saying, “I hesitated to say it was wrong but I knew it was rotten.” 2

Meanwhile, back in Britain, in the year Einstein became an international celebrity, Rutherford discovered a positively charged particle in the atomic nucleus which he called a proton. It was 1,836 times as massive as the negatively charged electron. From his study of radioactive decay Rutherford predicted the existence of a neutral particle in the nucleus, which he named a neutron, formed from an electron combined with a proton as a result of their mutual charge attraction. In radioactive decay, he said, the neutron broke back down into an electron and a proton. Rutherford’s maxim was, “These fundamental things have got to be simple,” so he surmised the neutron would appear neutral because the opposite charges of its composite electron and proton neutralised each other. In his mind the neutron existed only as an engagement of an electron and a proton.

One of Rutherford’s students, James Chadwick, sallied forth on a hunt for the neutron and, refining some of Rutherford’s research techniques, discovered it in 1932. He was awarded the 1935 Nobel Prize in Physics for this achievement. The profile of the neutron fitted Rutherford’s prediction. Its mass was about 0.1% greater than that of a proton, which corresponded to slightly more than the combined mass of a proton and an electron. In a process called K-capture, neutrons could be formed from an electron captured by a proton in an atomic nucleus, and when neutrons decayed in a radioactive nucleus, in a process called beta decay, they released a proton and an electron. For all intents and purposes the neutron appeared to be a bound state of an electron and a proton, just as Rutherford had proposed.
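The masses in the paragraph above can be checked directly. A minimal Python sketch, assuming CODATA 2018 rest-mass values in MeV/c² (taken from standard tables rather than from this essay):

```python
# CODATA 2018 rest masses in MeV/c^2 (assumed standard-table values)
M_PROTON = 938.27208816
M_NEUTRON = 939.56542052
M_ELECTRON = 0.51099895


def mass_comparison():
    """Compare the neutron mass with the proton and with proton + electron."""
    ratio_p_e = M_PROTON / M_ELECTRON                     # proton-to-electron mass ratio
    excess_over_p = M_NEUTRON - M_PROTON                  # neutron minus proton
    percent_over_p = 100 * excess_over_p / M_PROTON       # same difference as a percentage
    excess_over_pe = M_NEUTRON - (M_PROTON + M_ELECTRON)  # neutron minus (proton + electron)
    return ratio_p_e, excess_over_p, percent_over_p, excess_over_pe


ratio, dnp, pct, dnpe = mass_comparison()
print(f"m_p / m_e         = {ratio:.1f}")
print(f"m_n - m_p         = {dnp:.3f} MeV/c^2 ({pct:.2f} %)")
print(f"m_n - (m_p + m_e) = {dnpe:.3f} MeV/c^2")
```

Run as written it reports a proton-to-electron mass ratio of about 1836, a neutron about 0.14% heavier than a proton, and a neutron about 0.78 MeV/c² heavier than a proton and an electron combined.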

Werner Heisenberg was quick to object. He proclaimed the neutron could not be a bound state of an electron and proton. It had to be a unique neutral particle. Why was it necessary for him to suggest that when there was clear experimental evidence supporting the bound state model? Why did the entire world of physics snap into step behind him?

Werner Heisenberg had launched a system of quantum mechanics based on a hunch that the observer effect might apply at a quantum level. The observer effect is that measurements of certain systems cannot be made without the act of observation affecting them. Heisenberg determined that the process of making certain measurements in the subatomic world would increase the uncertainty about what was going on there. For example, if you wanted to look at a subatomic particle in order to ascertain its position, you would bounce a quantum of electromagnetic radiation off it. But bouncing a quantum of energy off a subatomic particle would give it a kick that changes its momentum by an amount that cannot be known exactly, so pinning down its position makes its momentum more uncertain. Looking at small objects requires more energy than looking at large ones, because resolving finer detail needs shorter wavelengths, and shorter wavelengths carry more energy per quantum. This is evident in the electron microscope, which uses electrons with far shorter wavelengths than the visible light of an optical microscope. Because subatomic particles are the smallest things in nature, ascertaining their position with any degree of certainty would require very large amounts of energy, which would give them an enormous kick. On this basis Heisenberg argued that physicists could not determine with certainty both the position and the momentum of a subatomic particle. He called this quantum indeterminacy.
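The trade-off Heisenberg described is usually written as Δx·Δp ≥ ħ/2, where Δx and Δp are the uncertainties in position and momentum and ħ is the reduced Planck constant. A minimal Python sketch, assuming that standard form; the two sample lengths, roughly the size of an atom and of a nucleon, are illustrative choices and not figures from the essay:

```python
HBAR = 1.054571817e-34  # reduced Planck constant, J*s (CODATA 2018)


def min_momentum_spread(delta_x_m: float) -> float:
    """Smallest momentum uncertainty (kg*m/s) allowed by dx * dp >= hbar / 2."""
    if delta_x_m <= 0:
        raise ValueError("position uncertainty must be positive")
    return HBAR / (2 * delta_x_m)


# Illustrative lengths: roughly an atom (1e-10 m) and a nucleon (1e-15 m)
for dx in (1e-10, 1e-15):
    print(f"dx = {dx:.0e} m  ->  dp >= {min_momentum_spread(dx):.2e} kg*m/s")
```

Shrinking Δx by a factor of 100,000 raises the minimum Δp by the same factor, which is the "enormous kick" described above.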

Heisenberg combined his ideas of quantum indeterminacy with other features of quantum theory, including the wave-particle duality proposed by Louis de Broglie and the wave mechanics of Erwin Schrödinger. Together they were incorporated into a formula known as the Heisenberg uncertainty principle, which was central to the Copenhagen Institute’s development of quantum mechanics from 1927 onwards. However, when Heisenberg applied his formula to the Rutherford-Chadwick neutron as an electron locked onto a proton, it failed. Confining an electron within a neutron pins down its position so precisely that the uncertainty principle implies an enormous indeterminacy in its momentum: electrons could be racing round on the surface of a proton with velocities up to 99.97% of the speed of light. Einstein’s theory of relativity determined that a moving particle is more massive than a particle at rest. At 99.97% of the velocity of light, electrons would possess about forty times the mass of an electron at rest. The wide range of electron velocities predicted by the uncertainty principle would therefore be reflected in a measurable range of mass values for neutrons, from that of a single electron and proton to that of a proton and forty electrons. In fact all neutrons have precisely the same mass of 1674.92 × 10⁻³⁰ kg. The neutron provided an experimental test that proved the uncertainty principle was wrong.
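The "forty times" figure follows from the relativistic factor γ = 1/√(1 − v²/c²), which in the relativistic-mass language used here multiplies the rest mass. A short Python check of that factor:

```python
import math


def lorentz_factor(beta: float) -> float:
    """Relativistic factor gamma = 1 / sqrt(1 - beta^2), where beta = v / c."""
    if not 0 <= beta < 1:
        raise ValueError("beta must satisfy 0 <= beta < 1")
    return 1.0 / math.sqrt(1.0 - beta * beta)


gamma = lorentz_factor(0.9997)
# In relativistic-mass terms: moving mass = gamma * rest mass
print(f"gamma at 0.9997c = {gamma:.1f}")
```

At v = 0.9997c this gives γ ≈ 40.8, consistent with the figure of about forty. The essay does not say what confinement length produces 0.9997c, so this sketch checks only the mass factor, not the speed itself.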

Heisenberg was not willing to accept that his uncertainty principle had been disproved, so he insisted that neutrons are not electrons bound to protons. In defiance of the rules of science he disregarded the evidence presented by the neutron in order to protect his theory, and the physics community closed ranks around him. By 1932, when the neutron was discovered, quantum mechanics had gained so much momentum that physicists were reluctant to accept uncertainty about the uncertainty principle underpinning it. They decided to ignore the evidence about the neutron until they could circumvent it. This became possible in the 1960s with the invention of quark theory.

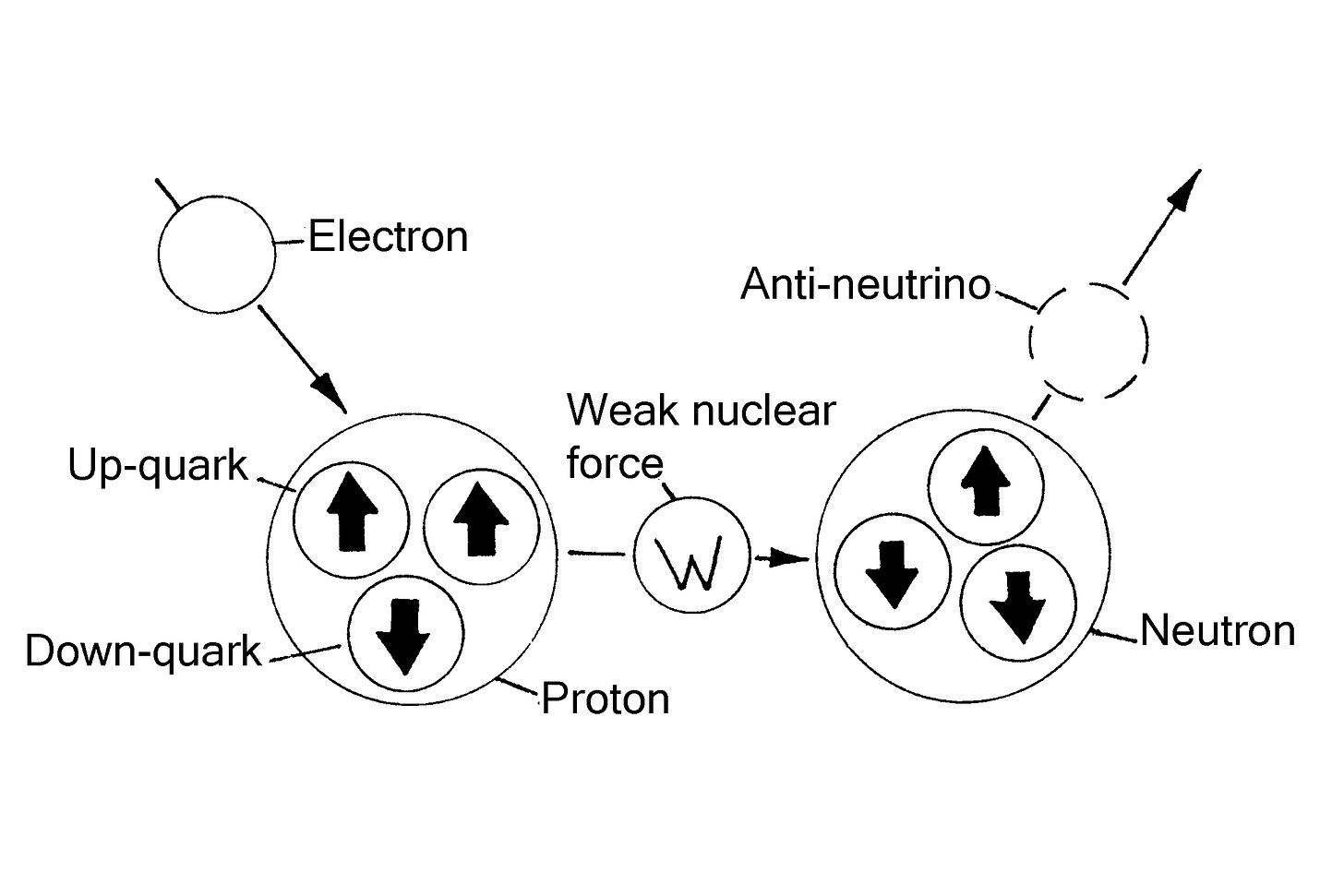

In Einstein’s mother tongue quark is slang for nonsense, and quark theory lived up to its name. Quarks were fabricated subatomic particles assigned fractional charges, even though fractional charges have never been observed in nature. For example, in quark theory a proton was supposedly made up of two up-quarks with +⅔ charge and one down-quark with −⅓ charge. These added up to a unit charge. The neutron was then supposed to be made up of two down-quarks with −⅓ charge and one up-quark with +⅔ charge, which added up to zero charge.
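The charge bookkeeping of the quark model can be checked with exact fractions. A minimal Python sketch, assuming the standard quark-model charges of +⅔ for the up-quark and −⅓ for the down-quark, in units of the proton charge:

```python
from fractions import Fraction

# Standard quark-model charges, in units of the proton charge e
QUARK_CHARGE = {"u": Fraction(2, 3), "d": Fraction(-1, 3)}


def total_charge(quarks: str) -> Fraction:
    """Sum the charges of a quark combination written as a string, e.g. 'uud'."""
    return sum((QUARK_CHARGE[q] for q in quarks), Fraction(0))


print("proton  uud:", total_charge("uud"))
print("neutron udd:", total_charge("udd"))
```

With these values uud sums to 1 and udd to 0; with a down-quark charge of +⅓ the proton would instead come out at 5/3.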

According to quark theory, in a process worthy of alchemy, when an electron interacts with a proton a weak nuclear force comes into play which transforms an up-quark into a down-quark, and the electron is temporarily lost. In this process the electron and proton supposedly cease to be and their place is taken by a neutron and a neutrino. When a neutron decays, the down-quark reverts to an up-quark and the proton reappears, along with an electron and an anti-neutrino, which carries away some of the energy released. These fundamental things were certainly not simple.
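The bookkeeping that decides which kind of neutrino appears in each process is the conservation of electric charge and of lepton number. A small Python sketch that checks only those two quantities (baryon number, energy and momentum are left out for brevity); the `Particle` class and `conserved` function are hypothetical helpers written for illustration:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Particle:
    name: str
    charge: int         # electric charge in units of e
    lepton_number: int  # +1 for electron/neutrino, -1 for their antiparticles, 0 otherwise


PROTON = Particle("p", +1, 0)
NEUTRON = Particle("n", 0, 0)
ELECTRON = Particle("e-", -1, +1)
NEUTRINO = Particle("nu_e", 0, +1)
ANTINEUTRINO = Particle("anti-nu_e", 0, -1)


def conserved(before, after) -> bool:
    """True if total charge and total lepton number match on both sides."""
    def totals(side):
        return (sum(p.charge for p in side), sum(p.lepton_number for p in side))
    return totals(before) == totals(after)


# Electron capture (K-capture): p + e- -> n + nu_e
print(conserved([PROTON, ELECTRON], [NEUTRON, NEUTRINO]))
# Beta decay: n -> p + e- + anti-nu_e
print(conserved([NEUTRON], [PROTON, ELECTRON, ANTINEUTRINO]))
# Swapping in an anti-neutrino for electron capture breaks lepton-number conservation
print(conserved([PROTON, ELECTRON], [NEUTRON, ANTINEUTRINO]))
```

The first two checks pass and the third fails, which is why electron capture is written with a neutrino and beta decay with an anti-neutrino.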

The quark account for neutrons reminds me of the story of a mad professor of puddings:

Once upon a time in a crazy world there was a mad professor of puddings. A student in his department of nutrition baked plums in a pudding then ran round shouting, “Eureka, I have just invented plum pudding!”

The professor was not impressed. He snorted over his spectacles, “That is not a plum pudding. That is a Black Forest gateau.”

The downcast student argued, “But I mixed plums into my pudding before I baked it, and when I weighed it, the weight was that of the plums and the pud, and at the end, when I shook the pudding, out came a plum. It has to be a plum pudding!”

The professor became really angry. “You stupid student, have you learnt nothing of what I have taught you? When you bake plums in a pudding the ingredients change their identity. Due to the interaction of a weak cooking force, the plums change into cherries and the pudding becomes a Black Forest gateau.”

“Well, how do you explain the plum that fell out of the pudding?” retorted the student. “There are no plums in a Black Forest gateau!”

“The gateau is unstable,” barked the professor, “after a few minutes it falls apart and as it does so the cherries revert to plums.”

How could the student argue? He was speaking to none other than the President of the Royal Society of Puddings. If he wanted to make it in his world and get a good career in the cake and pudding industry, he had to accept that plums baked in a pudding turn into cherries and the end result is a Black Forest gateau…

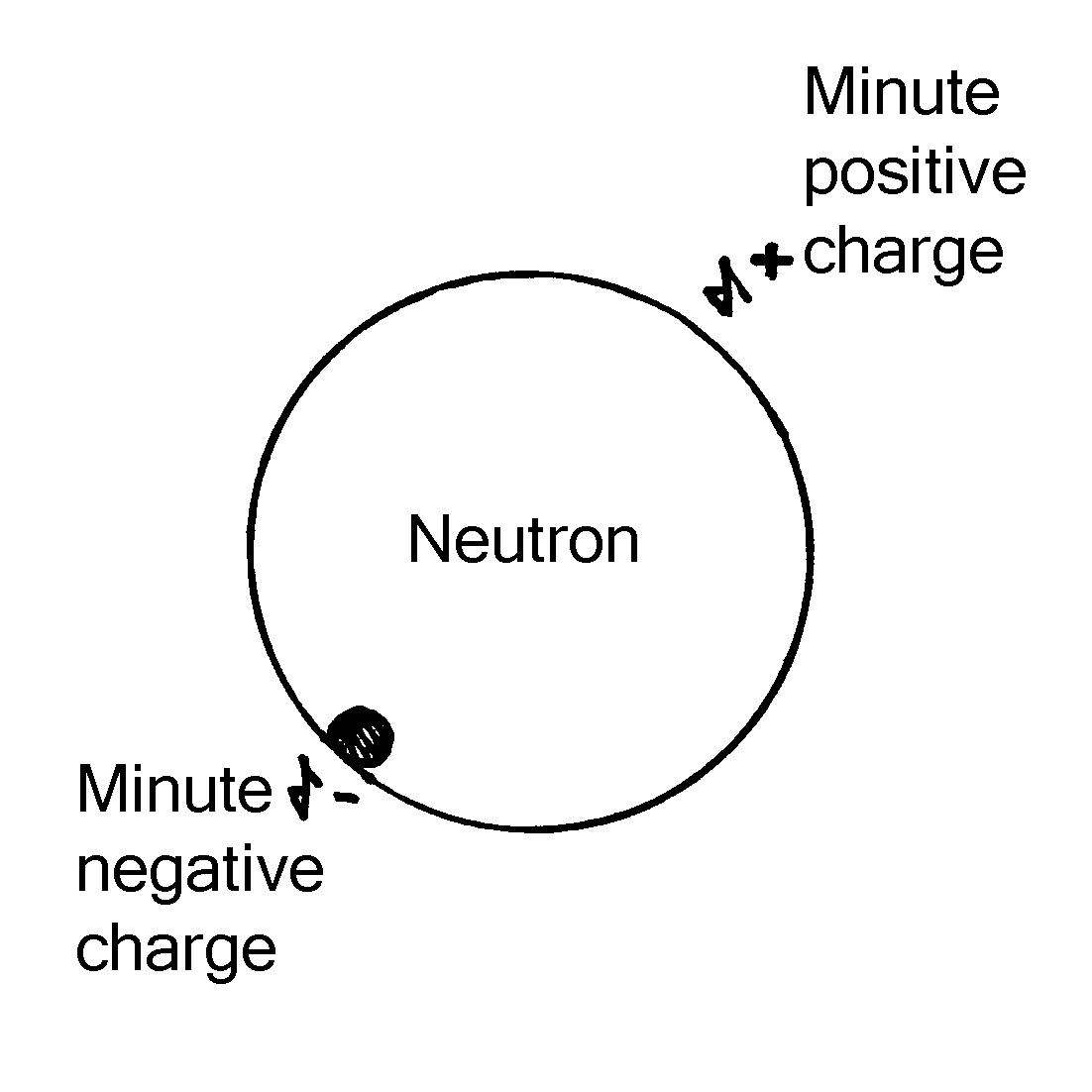

Experimental physics suggests that a neutron is not a truly neutral particle. The evidence suggests that it does contain charge, but that the effect of that charge is cancelled by the coexistence of opposites. This is what happens in atoms: they are formed of charged particles but display no net charge because they contain equal numbers of opposite charges.

The neutron has magnetism. It has a magnetic moment of 1.91 nuclear magnetons. 3 A particle cannot have a magnetic moment unless it has charge, because the magnetic moment of a particle is created by the spin of its charge.
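The nuclear magneton is defined as μ_N = eħ/(2m_p), built from the proton's charge e and mass m_p. A minimal Python sketch converting a moment quoted in nuclear magnetons to SI units, assuming CODATA 2018 constants; the measured neutron moment is negative (it points opposite to the spin), so −1.91 is used here:

```python
# CODATA 2018 constants (SI units)
E_CHARGE = 1.602176634e-19    # elementary charge, C
HBAR = 1.054571817e-34        # reduced Planck constant, J*s
M_PROTON = 1.67262192369e-27  # proton mass, kg

# Nuclear magneton, defined from the proton's charge and mass
MU_N = E_CHARGE * HBAR / (2 * M_PROTON)  # J/T


def moment_in_si(moment_in_nuclear_magnetons: float) -> float:
    """Convert a magnetic moment from nuclear magnetons to joules per tesla."""
    return moment_in_nuclear_magnetons * MU_N


print(f"nuclear magneton = {MU_N:.4e} J/T")
print(f"neutron moment   = {moment_in_si(-1.91):.3e} J/T")
```

This gives μ_N ≈ 5.05 × 10⁻²⁷ J/T and a neutron moment of roughly −9.6 × 10⁻²⁷ J/T.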

Then there is the issue of particle masses. If a neutron were a truly neutral particle it should be lighter, not heavier, than a proton. In Quarks: The Stuff of Matter 6, Harald Fritzsch raised this issue when he wrote:

We do not understand why the neutron is heavier than the proton. Indeed, an unbiased physicist would have to assume the opposite by the following logic. It is reasonable to think that the difference in mass between the proton and neutron is related to electromagnetic interaction since the proton has an electric field and the neutron does not. If we rob the proton of its charge, we would expect the neutron and the proton to have the same mass. The proton is therefore logically expected to be heavier than the neutron by an amount corresponding to the energy needed to create the electric field around it.

…meanwhile, in the crazy world, all was not going well for the mad professor of puddings. Another student baked plums in a pudding, then licked it. He tasted a plum and so established that the plums did not become cherries when baked in a pudding. The mad professor was very upset by the arrival of that awkward fact. He ranted and raved, shouting that licking introduced too great a margin of error to be treated as a valid measure, so the observation should be dismissed altogether.

The view of the neutron as a totally chargeless particle was challenged in 1957 by an experiment 4 which revealed a very weak electric dipole on the neutron. At one point the neutron displayed a minute negative charge, around a billion trillion times weaker than that of an electron. This appeared to support the idea that the neutron is a bound state of opposite charges which mostly cancel each other out, rather than an entirely chargeless particle.

Physicists were quick to dismiss the discovery of an electric dipole on the neutron on the grounds that the effect measured in the experiment was too weak to be taken as conclusive. In his textbook Nuclei and Particles 5, Emilio Segrè pointed out that the measured electric dipole moment of the neutron, e × (0.1 ± 2.4) × 10⁻²⁰ cm, where e is the charge on an electron, is so minute compared with its margin of error that “this moment could be exactly zero, in agreement with the theory.”

The electric dipole of a neutron would be expected to be very weak, because if the neutron were an electron bound to a proton their opposite charges would all but cancel each other out, but the measurement should not have been treated as exactly zero. Although the margin of error indicated the measurement was too weak to be conclusive, it did not suggest there was no effect at all. The way Segrè wrote about the result of that experiment suggests that he wanted to dismiss it because it revealed an awkward fact that needed to be totally discounted in order to protect ‘the theory’.
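How a result like 0.1 ± 2.4 relates to zero can be made concrete. A minimal Python sketch, assuming the ± 2.4 is a one-standard-error bound (the essay does not say which convention was used); `summarize` is a hypothetical helper written for illustration:

```python
def summarize(measured: float, sigma: float, k: float = 1.0):
    """Return the +/- k*sigma interval, the distance from zero in sigmas,
    and whether zero lies inside the interval."""
    low, high = measured - k * sigma, measured + k * sigma
    z = abs(measured) / sigma  # how many standard errors the value sits from zero
    return (low, high), z, low <= 0.0 <= high


# 1957 result as quoted in the essay, in units of e x 1e-20 cm
interval, z, contains_zero = summarize(0.1, 2.4)
print(interval, round(z, 3), contains_zero)
```

For these numbers the interval runs from −2.3 to 2.5, so it includes zero, and the central value sits about 0.04 standard errors from zero. On its own such a result neither establishes a non-zero dipole nor proves the dipole is exactly zero.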

Physicists argued against the bound-state theory because the neutron displays the same value of quantum spin as a proton. That is to be expected. An electron could not be spinning if it were locked onto a proton, so only the quantum spin of the proton would be apparent. The quantum spin of the electron would be suspended, not lost, because an electron leaving a proton in beta decay has the same quantum spin as an electron joining it in K-capture, so angular momentum would be conserved in the overall process of formation and decay of neutrons.

The physicist and Nobel laureate Richard Feynman put the principle plainly when he said, “If your theories and mathematics do not match up to the experiments then they are wrong.” 7 Science is evidence-based. In the history and philosophy of science it is unacceptable to disregard evidence in order to protect theories. When that occurs science is lost, because its single most important rule is that theories should be ditched if discoveries or experiments prove them wrong.

My father, Dr Michael Ash, said to me, “Even if you find yourself standing alone against the entire world, it does not mean you are wrong.” Albert Einstein found himself standing alone against the entire world of physics when he challenged the uncertainty principle of Werner Heisenberg. Do you think he was wrong? Do you think he deserved to be sidelined in physics for his stand against quantum mechanics? The scientific community thought he had lost his marbles. Do you agree with them, or do you think he was courageously upholding the integrity of science?

1. Matthews R., Unraveling the Mind of God, Virgin Books, 1992

2. Bernstein J., Quantum Profiles, Princeton University Press, 1991

3. Alvarez L. W., Bloch F., A quantitative determination of the neutron magnetic moment in absolute nuclear magnetons, Physical Review, 57: 111–122, 1940

4. Smith J. H., Purcell E. M., Ramsey N. F., Experimental Limit to the Electric Dipole Moment of the Neutron, Physical Review, 108: 120–122, 1957

5. Segrè E., Nuclei and Particles, Benjamin Inc., 1964

6. Fritzsch H., Quarks: The Stuff of Matter, Allen Lane, 1983

7. Calder N., The Key to the Universe: A Report on the New Physics, BBC Publications, 1977